Systemic Behavioral Design Is More Than Throwing Information And Counting On People’s Internal Factors.

Hi, Kevin here.

Recently I came across an interesting study on whether notifications about smartphone use effectively decrease screen time or affect related behavior. In brief, the study concludes that the effect of such notifications is either weak or nonexistent. One might say that a single study is not enough to constitute proof (from a frequentist point of view), and I would certainly agree: without further successful replications, this study is “just” an interesting scientific clue.

However, this clue adds to an existing body of evidence pointing in the same direction, reinforcing a sound, well-supported belief: informative messages about one's behavior raise awareness, but awareness doesn't lead to a change in that behavior. Intuitively, it's easy to think that the gap between awareness of a potentially “undesirable” state and any corrective action one would take is small and depends largely on people's willingness to change. But this intuition is a false belief, not corroborated by most of the social sciences literature, studies, and observations: it is a fundamental attribution error.

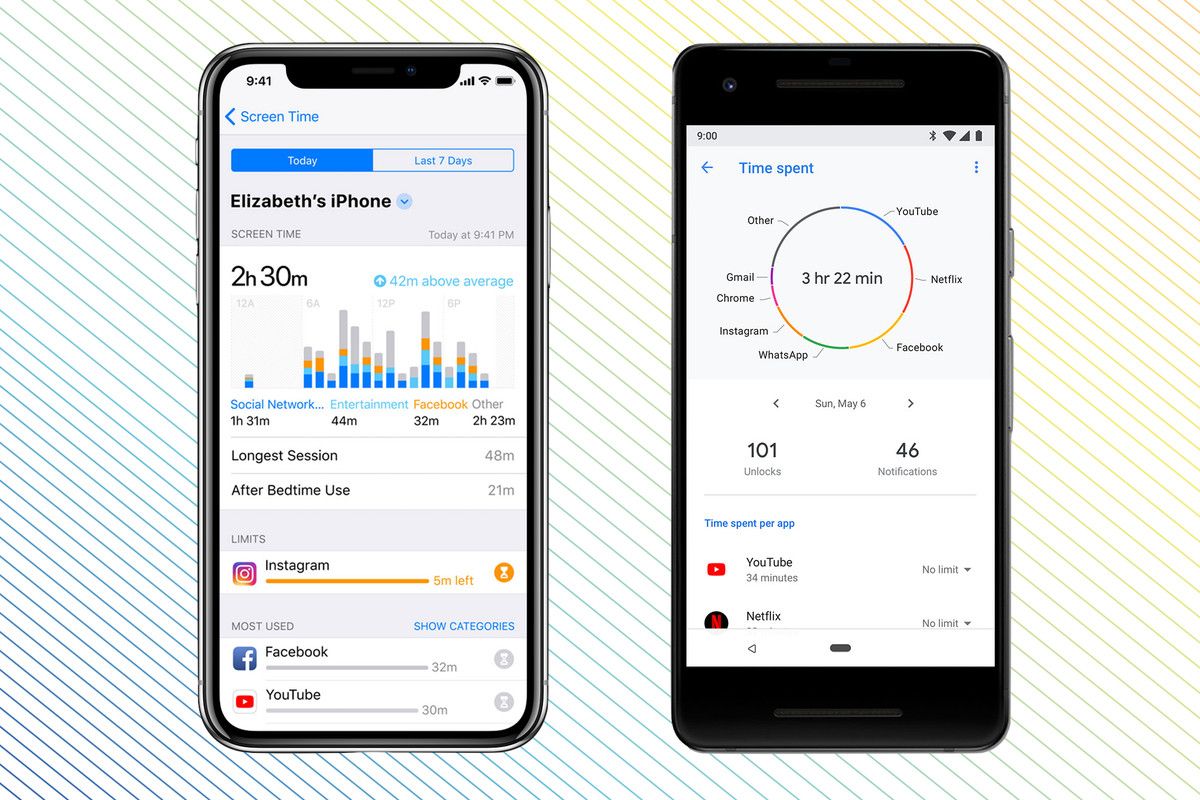

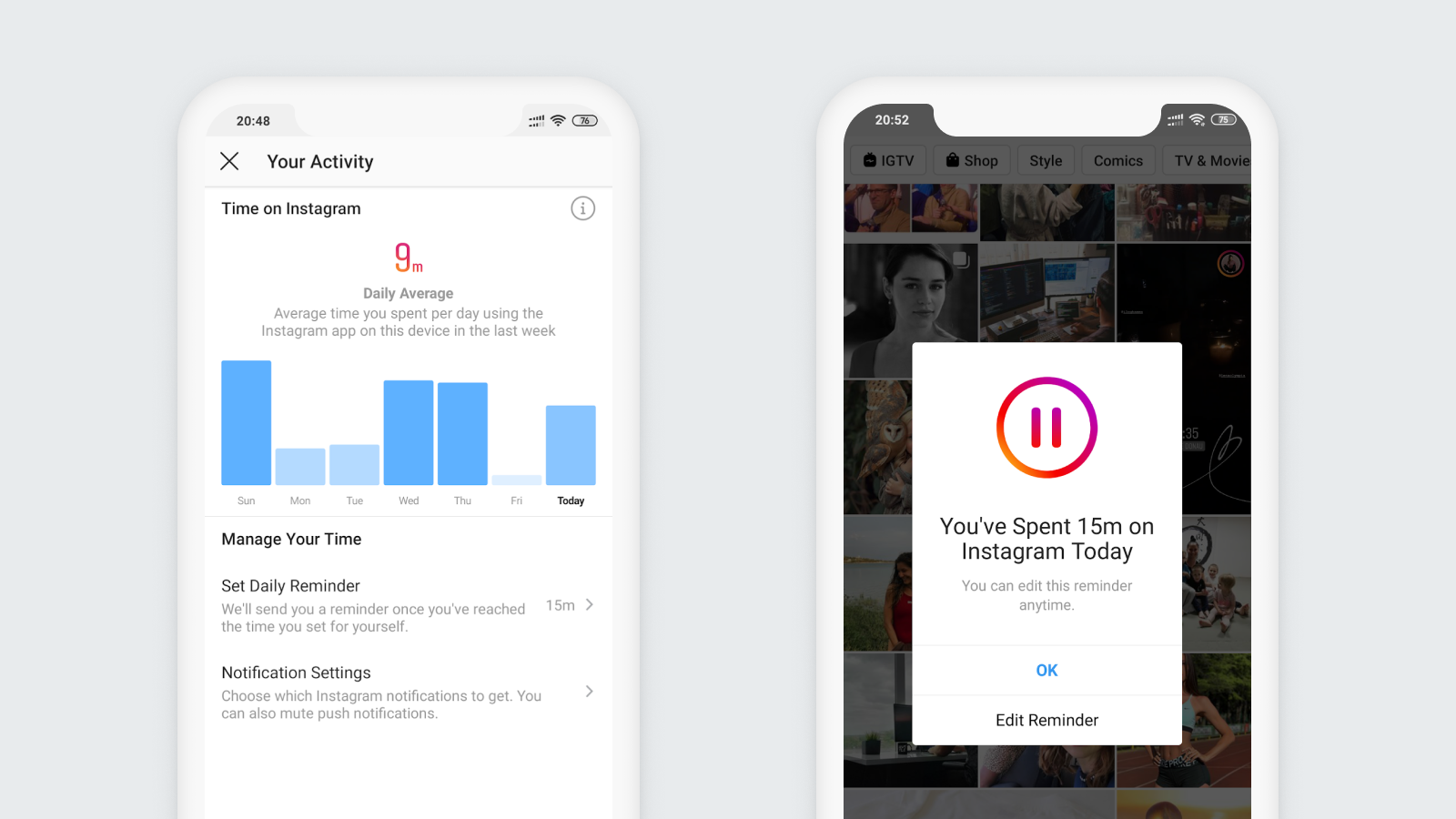

Yet we often see design decisions based on this kind of “intuition”. As seen in the study, tech giants have attempted to address so-called “problematic smartphone use” with system updates meant to help you “better control” your smartphone usage, mainly by making you more aware of the screen time you spend in each app. In most cases, the system lets you define time limits and various other restrictions. Here, we run into the issue described above: the design assumes that the user's awareness will lead to a change of behavior.

Designing For Behavioral Change

When I say “awareness doesn't lead to action”, it's clearly a simplification. In fact, awareness can lead to action if specific components are in place that allow and encourage people to act. To grasp the subtle yet critical nuances, we have to take several things into consideration.

Behavior As Component Of Systemic Interactions

First, no behavior exists in a vacuum or as an independent unit. Behaviors exist in larger contexts: they are part of more or less complex systems of interconnected components, with certain boundaries. These systems and boundaries (e.g. cultural ones) are shaped by individuals' past and current experiences, group dynamics, etc. Together, these interconnected components determine the likelihood of people's behaviors and reactions in a given context.

Here's a good example of how predictable group behavior can be in a controlled environment (a “known knowns” domain).

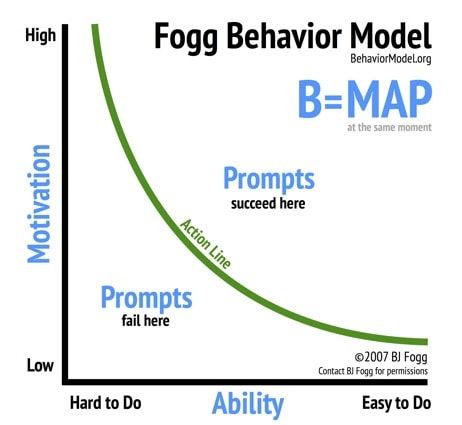

Mapping the main factors of a system is critical to understanding its specificities, and then to deciding what to adjust, and when, in order to change a behavior. Once mapped, these components mainly fall into two categories: enablers and motivators. In his behavior model (FBM), Dr. BJ Fogg describes three main factors that must converge at the same moment for a behavior to occur: Motivation, Ability, and a Prompt.

As Fogg explains, there are three core motivators (Sensation, Anticipation, and Belonging), each of which has two sides: pleasure/pain, hope/fear, and acceptance/rejection. There are also three paths to increasing ability:

- By increasing someone's skills (a.k.a. training people), thereby giving them more ability to perform the target behavior. That’s the “hard path”, because “most people resist learning new things”.

- By giving someone the tool or resource to make the behavior easier to perform. For example, a cookbook makes cooking at home easier to do.

- By scaling back the target behavior to make it easier to achieve, in smaller baby-steps.
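To make the convergence of the three factors more concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not Fogg's own formulation: the 0-to-1 scales, the multiplication of motivation by ability, the `ACTION_THRESHOLD` value, and the names `Situation` and `behavior_occurs` are all hypothetical choices made for this example.

```python
from dataclasses import dataclass


@dataclass
class Situation:
    motivation: float  # assumed scale: 0.0 (none) to 1.0 (very high)
    ability: float     # assumed scale: 0.0 (very hard) to 1.0 (very easy)
    prompted: bool     # whether a prompt occurs at this moment


# Hypothetical "action line": motivation x ability must exceed this value
# for a prompt to succeed. The number is arbitrary and only illustrative.
ACTION_THRESHOLD = 0.25


def behavior_occurs(s: Situation, threshold: float = ACTION_THRESHOLD) -> bool:
    """Return True only if the three factors converge at the same moment."""
    if not s.prompted:
        return False  # without a prompt, nothing is triggered
    return s.motivation * s.ability > threshold


# A notification acts as a prompt, but on its own it changes neither
# motivation nor ability.
aware_but_stuck = Situation(motivation=0.4, ability=0.3, prompted=True)
# Same motivation, but the target behavior was made easier (higher ability).
easier_behavior = Situation(motivation=0.4, ability=0.9, prompted=True)
# Motivated and able, but no prompt ever arrives.
no_prompt = Situation(motivation=0.9, ability=0.9, prompted=False)

print(behavior_occurs(aware_but_stuck))  # False
print(behavior_occurs(easier_behavior))  # True
print(behavior_occurs(no_prompt))        # False
```

In this toy version, a prompt that only informs (the first case) fails, while the same prompt succeeds once the behavior has been made easier to perform. That is the gap an awareness-only design leaves open.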

I have already written about Dr. BJ Fogg's model, as well as Nir Eyal's, several times. What's important to note is that they are great for visually representing and understanding something as complex as behavior. However, they are certainly not “silver bullets” and can be reductionist if one is not willing to use them within a systemic approach. The risks and ethical responsibility are as high as the unintended consequences of any blind decision.

Secondly, even when all the right circumstances are in place, change does not occur by itself. An event (a prompt) must happen, which can set off a chain reaction and eventually lead to a reinforcing feedback loop that passes a tipping point and reaches a sustainable state (the behavior becomes the new norm). A funny yet effective example of such a phenomenon can be observed in the following video:

As you can see, external factors play a huge role (as much as internal ones) in explaining and anticipating behaviors. It's a balanced feedback loop between two systems: the person and the environment in which they live.

Interestingly, our understanding of behaviors, communities, societies, and cultures is (among other things) closely linked to our understanding of cognition and of living beings in general. As Dr. Francisco Varela explains in his work on embodied cognition and the concept of autopoiesis:

“Every living being looks for balance within its environment (autopoiesis). Thus, behaviour emerges or results from a recursive process between perception (sensory organs), action (movement) and environment (in a broad sense) in which the being finds itself.

The attitude of an individual is guided by the principle of autopoiesis which is understood by the relationship between the subject and the means it disposes of (perception/action) in its environment.”

Act Upon The Components, Not Upon The System

Contrast Strategies: A Double-edged Sword

An effective way of leveraging a norm to trigger action from individuals is contrast. Showing someone their current state next to the norm plays on a cognitive phenomenon called “cognitive dissonance”, and the resulting gap creates an urge to act.

For instance, Apple (unlike Google?) uses it pretty well in the “Screen Time” tool on iOS, which displays “X minutes above average” next to your screen time counter. Another example is the use of the “fear appeal” strategy.

This is a double-edged sword: it might lead people to take corrective action, but it is entirely devoid of context.

- First, does the alleged norm (here, an average) mean anything? Does the norm actually equate to positive outcomes, and for whom (population, communities, etc.)?

- Does this norm apply to your context? For instance, a “journalist” or “community manager” is expected to use their device more. How does that affect their overall wellbeing?

- Is the current measure of what's desirable or not (the norm) the right one? In other words, is screen time the proper way to measure the impact of [digital technologies] on our lives?
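The questions above can be illustrated with a small Python sketch showing how the same screen time reads very differently depending on which “norm” it is compared against. All numbers, group names, and the `minutes_above_average` helper are made up for illustration; they do not come from Apple's tool or from any study.

```python
from statistics import mean

# Hypothetical daily screen time samples, in minutes, grouped by occupation.
screen_time = {
    "general": [120, 150, 180, 210, 240],
    "community_manager": [300, 330, 360, 390, 420],
}


def minutes_above_average(user_minutes, reference):
    """Difference between a user's screen time and the mean of a reference group."""
    return user_minutes - mean(reference)


user_minutes = 350  # a hypothetical community manager's daily screen time

# Norm 1: everyone pooled together, ignoring context.
everyone = [m for group in screen_time.values() for m in group]
vs_everyone = minutes_above_average(user_minutes, everyone)

# Norm 2: people whose job involves similar device use.
vs_peers = minutes_above_average(user_minutes, screen_time["community_manager"])

print(vs_everyone)  # 80: "80 minutes above average"
print(vs_peers)     # -10: "10 minutes below average"
```

The contrast shown to the user flips from alarming to reassuring without any change in their behavior, only in the reference point. Neither figure says anything about wellbeing.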

The (unintended) consequences of using contrast can cause harm instead of solving problems:

- Creating contrast without offering effective actions to take can lead people to inappropriate actions to relieve their dissonance.

- (Excessive) focus on the metrics (or control) as an end goal.

- Misplaced fear about presumed outcomes or meaning.

- False beliefs regarding the norm and its implications, or a false reference point.

- Stigmatization of specific groups (or reinforcement of existing stigma).

- Many more...

Contrast strategies (such as fear appeal) are quite effective when causality is clearly established, that is, when the components of a system and their relationships are known within a somewhat “narrow scope”. There, the likelihood of unintended consequences is at its lowest, because whichever strategy causes a change in behavior, this change leads to positive outcomes that outweigh the potential “side effects” of that strategy.

Screen time, however, is only correlated with “problematic smartphone use”: people rely more on their digital devices to fulfill their “addictive behaviors”, which increases the time spent on their device. This does not mean that high screen time causes or equates to problematic use. Focusing on screen time as a way of preventing problematic use can divert people from solutions (not necessarily related to smart devices) that could actually have a greater impact.

Ethics And Critical Thinking

Ethics in design, in AI, and in products & services in general is a hot topic. But I think people underestimate the deep bond between ethics and critical thinking: questioning the what, why, and how of what we do is a clear path to ethical considerations.

In this regard, I like Nir Eyal's “regret test”, a simple question that helps you judge the ethics of your decisions.

“If people knew everything the product designer knows, would they still execute the intended behavior? Are they likely to regret doing this?”

Shared Understanding & Participatory Design For Ethical Behavior Change

As I already discussed in my article “Everyone Is A Designer, You're A Facilitator”, teams building solutions are composed of different individuals who are, at some point, making design decisions. Things can become “dangerous” when this happens unconsciously, when the understanding of the “why, how, what” of the decisions is not explicitly shared. This leads to unintended, unethical, unfair solutions that affect the systems they serve, creating potentially harmful situations for people and their environment.

The Participatory Design approach and its methods (also called co-design or co-creation) can both create effective shared understanding and ensure more ethical design decisions. By making some design activities a team effort (e.g. user interviews, user tests, etc.) and by working with the people you aim to serve to co-map contexts & needs and co-create solutions, you:

- Foster new team routines that create shared understanding as a by-product.

- Drive the team to better question the “why, how, what” and be critical.

- Create a shared representation of the contexts people live in and what they value.

- Enable & grow a culture of human-centered critical thinkers.

This is alignment: intentional, thoughtful, ethical, and human by design.

References

- Sarah Diefenbach “The Potential and Challenges of Digital Well-Being Interventions: Positive Technology Research and Design in Light of the Bitter-Sweet Ambivalence of Change” Front. Psychol., 2018

- T. Panova et al. “Avoidance or boredom: Negative mental health outcomes associated with use of Information and Communication Technologies depend on users’ motivations”, Computers in Human Behavior, 2016, Elsevier.

- The American Psychiatric Association (APA) 2019, “Americans are Concerned about Potential Negative Impacts of Social Media on Mental Health and Well-being”.

- “How to run your first digital wellbeing design workshop” by Zsolt Szilvai, UX Collective July 2019.

- A. Roffarello et al. “The Race Towards Digital Wellbeing: Issues and Opportunities”, CHI Conference on Human Factors in Computing Systems Proceedings, 2019.

- “Fear Appeals: An approach used to change our attitudes and behaviors” by Shoba Sreenivasan, Psychology Today 2018.

- Fear Appeal, Wikipedia.

- Appeal to fear, Wikipedia.

- Appeal to emotion, Wikipedia.

- Tannenbaum et al. (2015) “Appealing to fear: A Meta-Analysis of Fear Appeal Effectiveness and Theories”.

- “Digital wellbeing is way more than just reducing screen time” by Kai Lukoff, July 2019, published in UX Collective.

- “Humans Don’t Realize How Biased They Are Until AI Reproduces the Same Bias, Says UNESCO AI Chair”, Shawe-Taylor interview in Synced, 2019.
